Circle Loss: A Unified Perspective of Pair Similarity Optimization

Jun 1, 2020

Yifan Sun

Changmao Cheng

Yuhan Zhang

Chi Zhang

Liang Zheng

Zhongdao Wang

Yichen Wei

Abstract

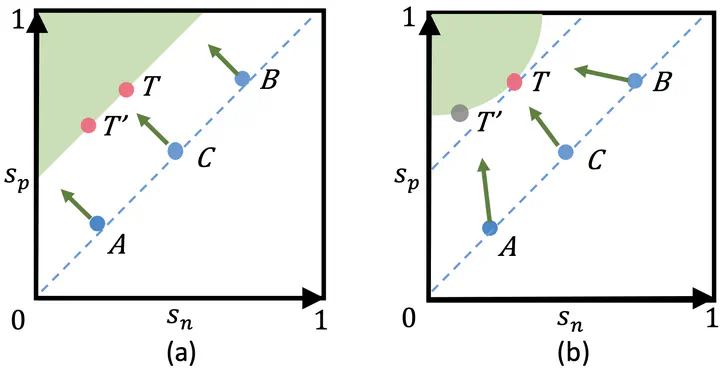

This paper provides a pair similarity optimization viewpoint on deep feature learning, aiming to maximize the within-class similarity $s_p$ and minimize the between-class similarity $s_n$. We find that a majority of loss functions, including the triplet loss and the softmax cross-entropy loss, embed $s_n$ and $s_p$ into similarity pairs and seek to reduce $(s_n - s_p)$. This manner of optimization is inflexible, because the penalty strength on every single similarity score is restricted to be equal. Our intuition is that if a similarity score deviates far from the optimum, it should be emphasized. To this end, we simply re-weight each similarity to highlight the less-optimized similarity scores. This results in the Circle loss, named for its circular decision boundary. The Circle loss has a unified formula for two elemental deep feature learning paradigms, i.e., learning with class-level labels and learning with pair-wise labels. Analytically, we show that the Circle loss offers a more flexible optimization approach towards a more definite convergence target, compared with the loss functions optimizing $(s_n - s_p)$. Experimentally, we demonstrate the superiority of the Circle loss on a variety of deep feature learning tasks. On face recognition, person re-identification, and several fine-grained image retrieval datasets, the achieved performance is on par with the state of the art.
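The re-weighting idea described in the abstract can be illustrated with a minimal sketch. The abstract does not give the exact weighting, so everything specific here is an assumption for illustration: the function name `circle_loss`, the margin `m`, the scale `gamma`, their default values, and the choice of optima `1 + m` and `-m`. The sketch weights each similarity by its distance from its optimum, so that less-optimized scores contribute more strongly.

```python
import math


def _logsumexp(values):
    """Numerically stable log(sum(exp(v))) for a non-empty list."""
    peak = max(values)
    return peak + math.log(sum(math.exp(v - peak) for v in values))


def _softplus(z):
    """Numerically stable log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def circle_loss(sp, sn, m=0.25, gamma=64.0):
    """Hypothetical sketch of a re-weighted pair similarity loss.

    sp: within-class similarities (ideally close to 1)
    sn: between-class similarities (ideally close to 0)
    m, gamma: assumed margin and scale, not taken from the abstract
    """
    optimum_p, optimum_n = 1.0 + m, -m   # assumed optima for s_p and s_n
    margin_p, margin_n = 1.0 - m, m      # assumed decision margins

    # Each weight grows with the distance of the score from its optimum,
    # so poorly optimized similarities are penalized more heavily.
    logits_p = [-gamma * max(optimum_p - s, 0.0) * (s - margin_p) for s in sp]
    logits_n = [gamma * max(s - optimum_n, 0.0) * (s - margin_n) for s in sn]

    # log(1 + sum_n exp(logit_n) * sum_p exp(logit_p))
    return _softplus(_logsumexp(logits_n) + _logsumexp(logits_p))


well_separated = circle_loss(sp=[0.9], sn=[0.1])
poorly_separated = circle_loss(sp=[0.3], sn=[0.7])
```

Under these assumptions, `well_separated` is much smaller than `poorly_separated`, because both scores in the second case sit far from their optima and receive large weights. A loss that only reduces $(s_n - s_p)$ would instead apply the same penalty strength to each score.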

Publication

2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)